The digital health landscape is currently defined by a paradox: while over 538 million wearables were shipped in 2024 alone, the data they collect remains trapped in incompatible silos. To address this “infrastructure challenge,” Anecoica Studio UG and the Good Tech Living Lab recently completed a successful real-world validation of A.R.I.A. (Affective Reasoning & Intelligent Adaptation). This collaboration, born from the EVOLVE2CARE Open Call, marks a critical shift in how we understand the body’s emotional context.

The API for Human Emotion

A.R.I.A. is not a consumer wellness app or a medical device; it is B2B infrastructure designed as a personalization API for wearable affect (emotional state) sensing. It serves as a standardization layer that lets any wearable device communicate with any application.
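
To make the idea of a standardization layer more concrete, here is a minimal Python sketch of one common way such a layer can be structured: per-vendor adapters that translate raw device payloads into a single shared record. The source does not describe A.R.I.A.'s actual interface, so the vendor names, payload fields, and the `BiometricSample` schema below are all hypothetical.

```python
from dataclasses import dataclass
from typing import Callable, Dict


@dataclass(frozen=True)
class BiometricSample:
    """Hypothetical vendor-neutral record that applications consume."""
    device_id: str
    timestamp_s: float  # Unix time in seconds
    heart_rate_bpm: float


# An adapter maps one (hypothetical) vendor payload into the shared schema.
Adapter = Callable[[dict], BiometricSample]


def from_vendor_a(payload: dict) -> BiometricSample:
    # Assumed format: {"id": ..., "ts": <seconds>, "hr_bpm": <number>}
    return BiometricSample(payload["id"], float(payload["ts"]), float(payload["hr_bpm"]))


def from_vendor_b(payload: dict) -> BiometricSample:
    # Assumed format: {"deviceId": ..., "timestampMs": <ms>, "heartRate": {"value": <number>}}
    return BiometricSample(
        payload["deviceId"],
        payload["timestampMs"] / 1000.0,  # milliseconds -> seconds
        float(payload["heartRate"]["value"]),
    )


ADAPTERS: Dict[str, Adapter] = {"vendor_a": from_vendor_a, "vendor_b": from_vendor_b}


def normalize(vendor: str, payload: dict) -> BiometricSample:
    """Translate a raw device payload into the shared schema, or fail loudly."""
    try:
        adapter = ADAPTERS[vendor]
    except KeyError:
        raise ValueError(f"no adapter registered for {vendor!r}") from None
    return adapter(payload)


a = normalize("vendor_a", {"id": "w1", "ts": 1700000000, "hr_bpm": 72})
b = normalize("vendor_b", {"deviceId": "w2", "timestampMs": 1700000000000, "heartRate": {"value": 72}})
print(a.heart_rate_bpm == b.heart_rate_bpm, a.timestamp_s == b.timestamp_s)  # True True
```

With this shape, supporting a new device means registering one more adapter, while applications only ever see `BiometricSample`.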

The Core Problem

At the heart of it is the “labels problem”: generic population models can reach high accuracy in the lab (89.4%) but drop to 56% in real-world conditions, because biometric signals vary significantly between individuals. A.R.I.A.’s value lies in calibration: it builds a personalized model for each user instead of relying on population averages.
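
The gap between population and personalized models can be illustrated with the simplest form of per-user calibration: expressing each reading relative to that user's own resting baseline (a z-score) instead of comparing it to one fixed threshold. This Python sketch is not A.R.I.A.'s method; the heart-rate values and both thresholds are invented purely for illustration.

```python
from statistics import mean, pstdev

POPULATION_THRESHOLD_BPM = 90.0  # hypothetical one-size-fits-all cutoff
PERSONAL_THRESHOLD_Z = 2.0  # hypothetical "elevated for this user" cutoff


def fit_baseline(resting_bpm: list[float]) -> tuple[float, float]:
    """Per-user calibration: mean and spread of a resting window."""
    sd = pstdev(resting_bpm)
    if sd == 0:
        raise ValueError("calibration window has no variance")
    return mean(resting_bpm), sd


def population_flag(bpm: float) -> bool:
    """Population model: the same cutoff for everyone."""
    return bpm > POPULATION_THRESHOLD_BPM


def personal_flag(bpm: float, baseline: tuple[float, float]) -> bool:
    """Personalized model: how far is this reading from the user's own baseline?"""
    mu, sd = baseline
    return (bpm - mu) / sd > PERSONAL_THRESHOLD_Z


# Illustrative resting heart rates (bpm) for two hypothetical users; not study data.
user_low = fit_baseline([58, 60, 62, 60, 60])   # rests around 60 bpm
user_high = fit_baseline([84, 88, 92, 88, 88])  # rests around 88 bpm

print(population_flag(75), personal_flag(75, user_low))   # False True
print(population_flag(91), personal_flag(91, user_high))  # True False
```

Here the fixed 90 bpm cutoff misses a 75 bpm reading for the user who rests near 60 bpm and flags a 91 bpm reading for the user who rests near 88 bpm; the per-user z-score handles both cases.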

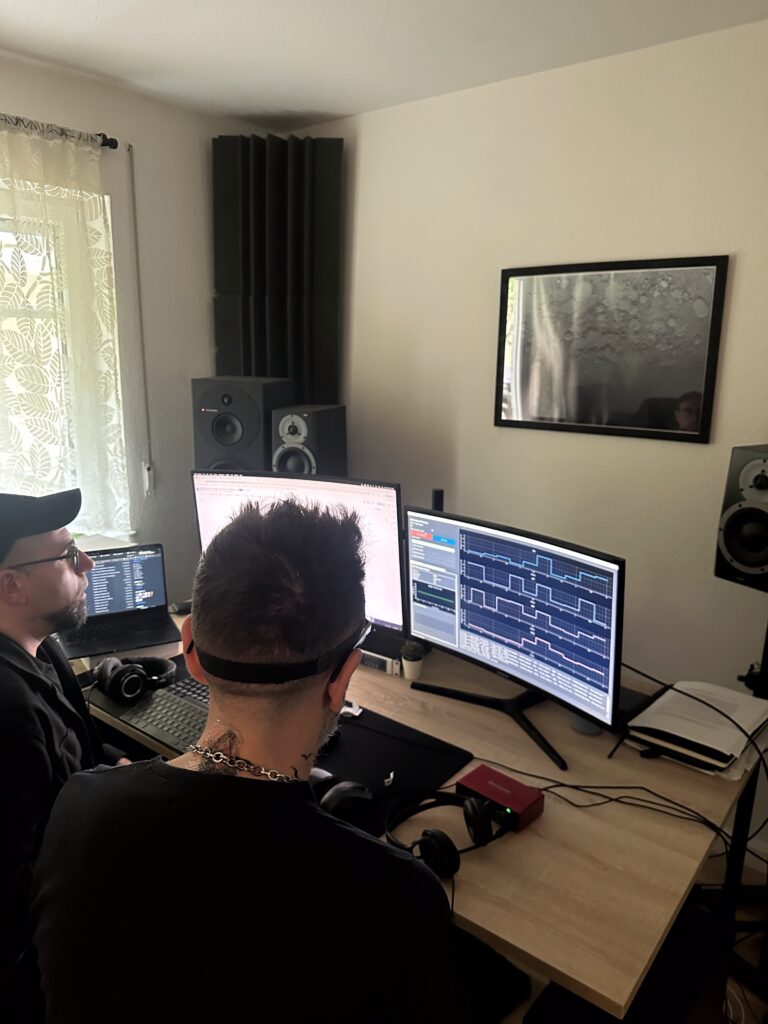

Moving from Lab to Life: TRL 4 Validation

On May 3rd, 2026, in Berlin, the project reached a major milestone, moving from TRL 3 (lab accuracy) to TRL 4 (real-world validation) on the Technology Readiness Level scale. The Living Lab pilot was a non-clinical exploratory study with 10 healthy adult volunteers.

Using a “Wizard-of-Oz” configuration, in which a human operator stands in behind the scenes for parts of the system that are not yet automated, the team tested how participants perceived AI-generated soundscapes calibrated to three experiential states: Energetic, Meditative, and Focus. Key observations from the pilot include:

- Emotional Safety: Participants consistently rated emotional safety at the highest levels, which the team attributes to a neutral framing designed to minimize the risk of discomfort.

- Perceptual Coherence: Participants reported high levels of absorption, suggesting that the calibrated soundscapes matched the intended mental states.

- Acceptability: Nearly all participants said they would return for future sessions, an early positive signal for the system’s commercial viability.

The Roadmap to 2030

The Berlin pilot is just the first step in a multi-layered roadmap toward establishing a neutral infrastructure standard for the $25B biometric economy.

- Layer 1 (Current): Focuses on 3-class arousal detection (Stress, Baseline, Amusement) with a 5-minute calibration.

- Layer 2 (2027): Will introduce voice modality for richer emotional resolution.

- Layer 3 (2028+): Will implement Per-User Embedding Spaces, creating continuous, longitudinal models that serve as a personalized “moat” for users.
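
Layer 1’s pairing of three arousal classes with a short per-user calibration can be pictured as a small classifier fitted only on that user’s calibration data. The Python sketch below uses a nearest-centroid classifier on per-user standardized features as a deliberately simple stand-in. The source does not specify A.R.I.A.’s model, features, or how the calibration session is labeled, so the heart-rate and skin-conductance features, the sample values, and the assumption that calibration yields a few labeled samples per class are all hypothetical.

```python
import math
from statistics import mean, pstdev

CLASSES = ("Stress", "Baseline", "Amusement")
Vector = tuple[float, ...]  # hypothetical features: (heart rate bpm, skin conductance uS)


class PersonalCentroidModel:
    """Hypothetical per-user 3-class model: nearest centroid on user-standardized features."""

    def __init__(self, calibration: dict[str, list[Vector]]):
        missing = [c for c in CLASSES if not calibration.get(c)]
        if missing:
            raise ValueError(f"calibration lacks samples for {missing}")
        rows = [row for c in CLASSES for row in calibration[c]]
        n_features = len(rows[0])
        # Per-user scaling so features in different units become comparable.
        self.mu = [mean(r[i] for r in rows) for i in range(n_features)]
        self.sd = [pstdev([r[i] for r in rows]) or 1.0 for i in range(n_features)]
        # One centroid per class, computed in this user's standardized space.
        self.centroids = {
            c: [mean(col) for col in zip(*map(self._scale, calibration[c]))]
            for c in CLASSES
        }

    def _scale(self, x: Vector) -> list[float]:
        return [(v - m) / s for v, m, s in zip(x, self.mu, self.sd)]

    def predict(self, x: Vector) -> str:
        """Return the class whose centroid is closest to the scaled reading."""
        z = self._scale(x)
        return min(CLASSES, key=lambda c: math.dist(z, self.centroids[c]))


# Illustrative calibration data for one hypothetical user; not study data.
CALIBRATION = {
    "Baseline": [(62.0, 2.0), (64.0, 2.2), (63.0, 2.1)],
    "Stress": [(85.0, 6.0), (88.0, 6.5), (86.0, 6.2)],
    "Amusement": [(72.0, 4.0), (74.0, 4.2), (73.0, 4.1)],
}
model = PersonalCentroidModel(CALIBRATION)
print(model.predict((87.0, 6.3)))   # Stress
print(model.predict((63.5, 2.05)))  # Baseline
```

Nearest centroid needs very little data per class, which fits a short calibration window; a real system might use richer features and a stronger model.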

Conclusion

The EVOLVE2CARE partnership has successfully bridged cutting-edge German deep tech and Romanian research expertise. By treating calibration as its primary product, A.R.I.A. aims to tackle the 80% churn problem in digital health, where static apps lose users because they cannot adapt to the individual.

How could a personalized, real-time understanding of your emotional state transform the apps you use every day?